Mar 13, 2026

You finish explaining a refactoring strategy to Replit Agent and wait for the text to appear. That pause is where flow breaks. Replit voice input needs near-instant transcription to keep developers thinking through architecture and logic instead of watching a tool catch up. Many built-in dictation tools introduce noticeable lag, cut sessions short, or misinterpret developer terminology like “JWT” or “Supabase.” New voice-first tools built for coding workflows remove that friction with local transcription, developer vocabulary learning, and faster response times. One example is a voice dictation tool designed for AI coding environments that works across editors, terminals, and browser-based tools while delivering transcription in about 200ms.

TLDR:

Some modern platforms deliver 200ms transcription vs. 700ms+ for Wispr Flow and Apple Dictation, keeping you in flow state.

Speaking at 150 WPM vs. typing at 40 WPM makes detailed Replit Agent prompts 3.75x faster to write.

Certain tools learn your codebase vocabulary automatically and improve transcription accuracy over time for your project.

SOC 2 and HIPAA compliance with offline mode protects proprietary code without sending audio to external servers.

Certain AI-powered voice dictation tools work across your entire development environment for $12/month.

Why Voice Input Matters for Replit Developers

Replit added audio support to its AI integrations in January 2026, introducing four GPT-4o audio models for speech-to-text transcription and audio generation. Developers can now build voice-supported applications or integrate speech workflows inside Replit projects.

AI agents work better with detailed instructions. A typed prompt might say "build a login form." A spoken prompt becomes "build a login form with email validation, password strength requirements, and a forgot password flow that sends a magic link." Similar time investment. Better output.

Replit’s audio capabilities currently exist through its AI integrations and models inside the Replit interface. You still need voice dictation that works across your entire workflow: terminal commands, documentation, code comments, PR descriptions, and prompts in other AI coding tools.

| Tool | Transcription Speed | Accuracy vs. Baseline | Pricing | Developer Features |
|---|---|---|---|---|
| Willow | 200ms latency | 3x more accurate than built-in dictation | $12/month for individual users | Automatic file tagging, variable name recognition, learns codebase vocabulary, SOC 2 and HIPAA compliant, offline mode |
| Wispr Flow | 700ms+ latency | Standard accuracy, no personalization | $15/month | Cross-device sync (Mac, Windows, iOS), cloud-based processing, no developer-specific vocabulary recognition |
| Apple Dictation | 700ms+ latency | Baseline accuracy | Free with macOS | Limited session handling during longer dictation, struggles with framework names and API endpoints, no personalization or learning |

The Speed Advantage: 150 WPM Speaking vs. 40 WPM Typing

The average developer types at 40 words per minute. Speaking clocks in at 150 words per minute. That's a 3.75x speed multiplier on every prompt, comment, and documentation block you write in Replit.

The gap matters most when explaining logic to an AI agent, because voice changes how much context you are willing to give. Typing "refactor this function" takes a few seconds and leaves the agent guessing. Speaking "refactor this authentication function to use JWT tokens instead of session cookies, keep the existing error handling, and add rate limiting to prevent brute force attacks" takes about ten seconds at 150 WPM, versus roughly 40 seconds to type at 40 WPM. The longer prompt delivers context that saves two rounds of clarification and gets you working code faster.
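The word rates quoted above can be turned into concrete durations. The following is a minimal sketch, assuming steady rates of 40 WPM for typing and 150 WPM for speaking and ignoring pauses, corrections, and transcription latency. The function and variable names are illustrative and not part of any tool's API.

```python
TYPING_WPM = 40     # typing rate quoted in the article
SPEAKING_WPM = 150  # speaking rate quoted in the article


def word_count(text: str) -> int:
    """Count whitespace-separated words in a prompt."""
    return len(text.split())


def seconds_to_produce(words: int, words_per_minute: float) -> float:
    """Seconds needed to produce `words` words at a steady rate."""
    return words * 60 / words_per_minute


short_prompt = "refactor this function"
detailed_prompt = (
    "refactor this authentication function to use JWT tokens instead of "
    "session cookies, keep the existing error handling, and add rate "
    "limiting to prevent brute force attacks"
)

# Ratio of the two rates: how many times faster speaking is than typing.
speedup = SPEAKING_WPM / TYPING_WPM

if __name__ == "__main__":
    for label, prompt in (("short", short_prompt), ("detailed", detailed_prompt)):
        n = word_count(prompt)
        typed = seconds_to_produce(n, TYPING_WPM)
        spoken = seconds_to_produce(n, SPEAKING_WPM)
        print(f"{label}: {n} words, typed {typed:.1f}s, spoken {spoken:.1f}s")
    print(f"speedup: {speedup:.2f}x")
```

Under these assumed rates, the 26-word detailed prompt takes about 10 seconds to speak and about 39 seconds to type, while the three-word prompt takes about 4.5 seconds to type.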

Replit Agent Workflows: Where Voice Input Delivers Maximum Impact

Voice dictation changes how you work with Replit Agent across four core workflows. Speaking multi-step agentic prompts lets you describe architecture, constraints, and edge cases without abbreviating. Instead of typing "add auth," say "add authentication with OAuth2, store tokens in environment variables, handle token refresh automatically, and redirect to the dashboard after login." Speak README files to capture reasoning as you build. Speak code comments to stay in flow state. Narrate pull request descriptions while reviewing your work to capture detail that typing compresses.

Apple Dictation Limitations for Developer Workflows

Apple’s built-in dictation may pause or require manual reactivation during longer sessions, forcing restarts mid-prompt when you describe multi-step Replit Agent instructions. Like other general-purpose dictation tools, it may misinterpret technical terms such as framework names or API endpoints, transcribing "Supabase" as "super base" and "JWT" as "J.W.T." instead of recognizing developer terminology. Without personalization, it treats every session as isolated and won't learn project-specific terms. Its response latency exceeds 700ms, while Willow delivers 200ms transcription that keeps developers in flow state.

Wispr Flow: Cross-Device Capability with Accuracy Tradeoffs

Wispr Flow runs on Mac, Windows, and iOS with cloud-based processing that delivers cross-device consistency. The subscription costs $15 per month, positioning it above Willow's $12/month pricing tier for individual users.

The cloud model introduces latency above 700ms per transcription request, compared to Willow's 200ms processing speed. For Replit developers describing agentic workflows or speaking multi-step prompts, the delay breaks flow state and creates perceptible lag between speaking and seeing text appear. Users report that the higher latency and lower accuracy make the dictation experience worse when prompting tools like Replit.

Wispr Flow sends audio to external servers for transcription. Development teams working with proprietary codebases or handling sensitive data face compliance questions when audio leaves the local machine. The tool lacks developer-specific vocabulary recognition, requiring manual correction for file names, variable references, and framework terminology.

Willow's Technical Advantages for Replit Development

Willow delivers three technical advantages for Replit development: 200ms transcription latency keeps you in flow state, personalized learning makes transcription more accurate over time, and SOC 2 and HIPAA compliance protects production code.

The personalization engine learns as you work. Speak a file name once, correct it if needed, and Willow remembers it for every future prompt. The same applies to variable names, function references, and framework terminology.

Automatic file tagging handles AI IDE workflows. When you reference files in a Replit Agent prompt, Willow tags them correctly. Variable name recognition processes camelCase and snake_case without manual correction.
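To make the identifier formatting concrete, here is a minimal sketch of turning a spoken phrase into camelCase or snake_case. It is an illustration only: the article does not describe how Willow does this internally, and the helper names `to_camel_case` and `to_snake_case` are hypothetical.

```python
import re
from typing import List


def _spoken_words(phrase: str) -> List[str]:
    """Split a spoken phrase into lowercase alphanumeric words."""
    return re.findall(r"[a-z0-9]+", phrase.lower())


def to_camel_case(phrase: str) -> str:
    """'user auth token' becomes 'userAuthToken'."""
    words = _spoken_words(phrase)
    if not words:
        return ""
    # First word stays lowercase; each later word gets an initial capital.
    return words[0] + "".join(w.capitalize() for w in words[1:])


def to_snake_case(phrase: str) -> str:
    """'user auth token' becomes 'user_auth_token'."""
    return "_".join(_spoken_words(phrase))


if __name__ == "__main__":
    print(to_camel_case("user auth token"))
    print(to_snake_case("user auth token"))
```

A real dictation tool would also need to decide which convention applies in a given file, which this sketch leaves to the caller.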

Real-Time Transcription Speed and Developer Flow State

Flow state breaks when you wait for tools to catch up with your thinking. At 700ms latency, you finish speaking and watch the screen. That gap forces your brain to context switch.

Willow processes speech at 200ms. The text appears as you speak. Your brain stays in problem-solving mode instead of tool-monitoring mode. When explaining a refactoring strategy to Replit Agent, you think through the logic once and keep moving. No pause. No watching.

The difference compounds across a day of development. Every prompt, comment, and documentation block either keeps you in flow or pulls you out of it.

How Willow Learns Your Development Vocabulary

Willow builds a custom dictionary from every correction you make. Speak a file name, variable, or framework term once. If the transcription needs adjustment, correct it. Willow remembers that correction permanently and applies it to every future session.
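The correct-once, remember-afterward behavior can be sketched as a small lookup applied to each new transcript. This is an assumption-laden illustration, not Willow's implementation: the `CorrectionDictionary` class and its methods are hypothetical, and a production system would likely work on the speech model's output in a more sophisticated way.

```python
import re
from typing import Dict


class CorrectionDictionary:
    """Remembers user corrections and reapplies them to later transcripts."""

    def __init__(self) -> None:
        # Maps a lowercased misheard phrase to the corrected term.
        self._corrections: Dict[str, str] = {}

    def learn(self, heard: str, corrected: str) -> None:
        """Record that `heard` should be written as `corrected`."""
        self._corrections[heard.lower()] = corrected

    def apply(self, transcript: str) -> str:
        """Replace every known misheard phrase in the transcript."""
        if not self._corrections:
            return transcript
        # Try longer phrases first so they win over shorter overlapping ones.
        phrases = sorted(self._corrections, key=len, reverse=True)
        alternatives = "|".join(re.escape(p) for p in phrases)
        # Lookarounds keep matches from starting or ending inside a word.
        pattern = re.compile(rf"(?<!\w)({alternatives})(?!\w)", re.IGNORECASE)
        return pattern.sub(
            lambda m: self._corrections[m.group(1).lower()], transcript
        )


if __name__ == "__main__":
    dictionary = CorrectionDictionary()
    dictionary.learn("super base", "Supabase")
    dictionary.learn("J.W.T.", "JWT")
    print(dictionary.apply("store the J.W.T. in super base"))
```

The example corrections reuse the mis-transcriptions mentioned elsewhere in the article ("super base" and "J.W.T."). Persisting the mapping between sessions is omitted here.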

The context-aware spelling engine identifies technical terms from your active workspace. When you reference "Supabase" or "JWT" in a Replit prompt, Willow recognizes them as project vocabulary instead of generic words. CamelCase and snake_case variable names appear correctly formatted without manual intervention.

Many general-purpose dictation tools rely on shared language models instead of adapting deeply to a specific developer codebase. Willow improves with use. The system adapts to your codebase terminology, library names, and function references automatically. No manual training required. Each dictation session makes the next one more accurate within your specific development environment.

Privacy and Security for Development Teams

Development teams working on proprietary codebases face compliance questions when dictation tools send audio to external servers. Cloud-based transcription creates audit trails that conflict with data residency requirements and internal security policies.

Willow is SOC 2 certified and HIPAA compliant, with a zero-data-retention policy. Audio is never stored: transcription happens in real time and the audio is discarded after processing. For teams at healthcare, finance, or government organizations handling customer data, this removes compliance friction. Shared shortcuts and dictionary terms also let development teams standardize code snippets and technical vocabulary across the organization.

Offline mode runs a local transcription model directly on your machine. No internet connection required. No data leaves your device. This works the same way across Cursor and other AI editors. Some teams may require additional security documentation before approving cloud-based dictation tools for production environments.

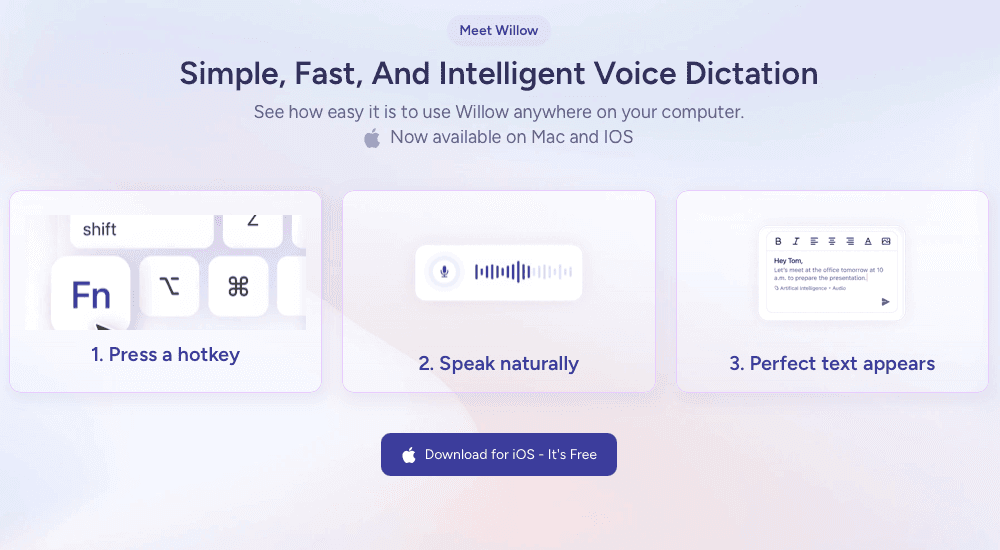

Setting Up Willow for Replit Workflows

Press the Function (fn) key from anywhere on your Mac or Windows machine to activate Willow. Speak your prompt, code comment, or documentation block. Text appears in Replit's browser interface in 200ms, keeping you in flow state while Wispr Flow and Apple Dictation lag at 700ms+.

Willow works across your entire development environment without app switching. Speak into Replit Agent prompts, README files, and code editors using the same hotkey.

Add project-specific terms to your custom dictionary by correcting them once. Willow remembers file names and framework terminology automatically, then learns your coding style for fewer edits over time.

Voice Input as a Competitive Advantage for Replit Teams

Development teams using voice dictation for Replit can ship code faster. Speaking at 150 WPM versus typing at 40 WPM means prompts get written in roughly one-quarter of the time with more detail included, producing better agent output on the first attempt.

Willow learns how your team writes over time. Shared dictionaries sync technical vocabulary across developers. When one engineer corrects "Supabase" or a custom function name, everyone benefits. Wispr Flow and Apple Dictation treat every session as isolated.

SOC 2 and HIPAA compliance removes friction for enterprise teams. Offline mode keeps proprietary code local. Voice dictation can save up to 30 minutes per developer per day.

FAQs

How does Willow's 200ms transcription speed improve my Replit workflow?

At 200ms, text appears as you speak, keeping your brain in problem-solving mode instead of tool-monitoring mode. Wispr Flow and Apple Dictation lag at 700ms+, forcing context switches that break flow state when explaining refactoring strategies or multi-step prompts to Replit Agent.

Does Willow work outside of the Replit interface?

Willow works across your entire development environment with a single Function (fn) key hotkey. Speak into terminal commands, documentation, code comments, PR descriptions, and prompts in other AI coding tools without app switching or Replit-only limitations.

What happens to my audio when using Willow with proprietary code?

Audio never gets stored. Willow processes transcription in real time and discards the audio immediately afterward under a zero-data-retention policy, backed by SOC 2 and HIPAA compliance. Offline mode runs a local model directly on your machine so no data leaves your device.

How much faster is speaking compared to typing for Replit Agent prompts?

Speaking clocks in at 150 words per minute versus typing at 40 WPM, a 3.75x speed multiplier. In the time it takes to type a 10-word prompt, you can speak about 37 words covering architecture, constraints, and edge cases, producing better agent output on the first attempt.

Final Thoughts on Optimizing Replit Agent Prompts with Voice

AI agents in Replit produce stronger results when prompts include clear constraints, architecture details, and edge cases, yet typing those instructions slows the workflow. Replit voice input changes that interaction by letting developers speak prompts at around 150 words per minute instead of typing closer to 40. Willow adds fast transcription and vocabulary learning so framework names, file references, and variables appear correctly as you speak. Over time it adapts to your coding language, making longer and more detailed prompts easy to create without breaking focus. If you want to remove the prompt bottleneck and improve first-attempt results with Replit Agent, start using Willow for Replit voice input in your development workflow.